by adm_etss | Oct 15, 2019 | Blog, Cyber Security, Software Testing

Needless to say, it was quite a busy start to autumn here at Euro Testing Software Solutions, and it all started with the MESS conference in Phoenix, Arizona. Now that we are back in the office, we have gone over our notes and decided to share what we found especially interesting at the conference.

- Basic needs are important: Although the US medium enterprise market is considerably larger than the European one, it seemed narrower in terms of the variety of project requests, consisting mainly of clients looking for solutions to fairly basic software testing needs. However, while the demands are not especially complex, the expected scale of implementation is vast.

- Cybersecurity focus: We noticed that most project requests for application development revolved around cybersecurity, which remains a hot topic in the US market. Next, the most sought-after expertise was software testing automation and related services (DevSecOps, RPA). Lastly, there was a lot of discussion around cloud architecture and IoT.

- Every cent counts: Most IT infrastructure/development budgets seemed to be allocated to clearly defined, fixed-price projects rather than to broader service offerings (off-shoring, managed services, etc.). All-in-one services were a tough sell.

- Trust trumps hype: Regardless of project size, having great references is key in the US market. This becomes especially relevant when new(er) products & services are introduced. We were pleasantly surprised that people remembered us from last year’s conference, which worked in our favor for new engagements.

- Software Testing as a Pizza: Lastly, the “reinterpretation” of our services was a hit. Repackaging functional testing, regression testing, performance & security testing as types of pizza (plus positioning RPA & Automation as toppings) helped participants get a better understanding of their relevance in specific stages of an application’s lifecycle.

Fun fact: We found it very interesting that there were a lot of discussions around Arizona’s 5 key industries: cotton, cattle, citrus, copper & climate. We hope our experience can prove helpful. If you would like to know more about our mix & match software testing services, contact us for more information.

by adm_etss | Apr 3, 2019 | Blog, Software Testing

Let’s begin with an analogy about software testing. Suppose for a moment that bugs are like medical conditions (no pun intended). The process we use to identify them resembles the medical one: differential diagnosis. We detect the harmful condition and offer a course of treatment. Yet, just as in medicine, we are all familiar with situations where things get complicated. In software testing, one of the most challenging situations we can encounter involves a particular type of error: false positives and false negatives. What are they, and how do we approach them?

The false positive – our tests are marked as failed even though the software actually functions as it should. We report errors that don’t exist: the data tells us the software does not work as intended, yet it does.

From our experience, this type of error has an insidious impact. While it doesn’t affect the software itself, it tends to erode developers’ trust in the software testing process.

Some may even begin to question the software testing company’s expertise. However, it’s usually unwise to penalize testers for false positives (or even base KPIs on them), because this can only lead to an undesirable situation: testers afraid to report issues for fear of backlash. Also, keep in mind that most false positives stem from unclear situations, e.g. missing documentation. As cliché as it might sound, it’s better to be safe than sorry.

The false negative – our tests are marked as passed even though they should have failed. We detected no problems at the time of the test, yet they were present. The software continues to run with embedded glitches when it shouldn’t.

What can happen? In the best-case scenario, we detect them at a later stage of testing and fix them. Bad case: we notice them after the software has been deployed. Worst case: the bugs remain in the software for an indeterminate amount of time.

The main problem with these errors is that they can affect the business bottom line by “breaking” the software.

We think one of the best ways of detecting false negatives is to deliberately insert errors into the software and verify whether the test cases discover them, an approach known as mutation testing.
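To illustrate the idea, here is a minimal mutation-testing sketch in Python. The function `is_adult`, its hand-written mutant and both test suites are hypothetical examples; dedicated mutation-testing tools generate and run mutants automatically rather than relying on hand-written ones.

```python
# Minimal mutation-testing sketch. All names here are hypothetical examples.

def is_adult(age):
    """Original implementation: adults are 18 or older."""
    return age >= 18


def is_adult_mutant(age):
    """Mutant: the >= operator has been deliberately changed to >."""
    return age > 18


def weak_suite(impl):
    """A test suite that never checks the boundary value."""
    return impl(30) is True and impl(5) is False


def strong_suite(impl):
    """A test suite that also checks the boundary value 18."""
    return weak_suite(impl) and impl(18) is True


def mutant_killed(suite, original, mutant):
    """A mutant is 'killed' when the suite passes on the original but fails on the mutant."""
    return suite(original) and not suite(mutant)


print(mutant_killed(weak_suite, is_adult, is_adult_mutant))    # False: mutant survives
print(mutant_killed(strong_suite, is_adult, is_adult_mutant))  # True: mutant killed
```

A mutant that survives, as it does against `weak_suite`, points to a gap where the tests would report a pass for faulty code, i.e. a potential false negative.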

What can we do about it?

Some argue that reporting false positives is preferable to missing false negatives. This is because while the former keep things “internal”, the latter have wider business implications: from bad software to unhappy end users.

Keep in mind that both are, by nature, hard to detect. Their causes can vary: from the way we approached the test, to the automation scripts we used, to the integrity of the test data.

From our experience, having test case traceability in place works best to prevent both. When did the failure first show itself? Can we track it back in time? Was it linked to additional implementations? Did some software functionality change? Does the test data look suspicious? These questions usually help us figure out which test cases were most likely affected.
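Part of this detective work can be automated if test results are stored per build. The sketch below assumes a hypothetical `RunRecord` format holding the build, the verdict and the requirements changed in that build, ordered oldest to newest, and walks back through the history to find where the current failure streak began.

```python
from dataclasses import dataclass, field


@dataclass
class RunRecord:
    """One execution of a test case against a specific build (hypothetical record format)."""
    build: str
    passed: bool
    changes: list = field(default_factory=list)  # requirements/features changed in this build


def first_failure(history):
    """Return the earliest run of the current failing streak, or None if the latest run passed.

    `history` must be ordered from oldest to newest.
    """
    if not history or history[-1].passed:
        return None
    index = len(history) - 1
    # Walk back while the test keeps failing to find where the streak began.
    while index > 0 and not history[index - 1].passed:
        index -= 1
    return history[index]


history = [
    RunRecord("1.0.0", True),
    RunRecord("1.1.0", True, ["REQ-12 login timeout"]),
    RunRecord("1.2.0", False, ["REQ-31 password policy"]),
    RunRecord("1.2.1", False, ["REQ-40 audit log"]),
]

culprit = first_failure(history)
print(culprit.build, culprit.changes)  # 1.2.0 ['REQ-31 password policy']
```

The changes attached to that first failing build are the natural starting point for checking whether a software change, rather than the test itself, is at fault.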

All things considered, we believe it comes down to being responsible in software testing. It’s important to actually care about the test and not just do a superficial track & report.

If you think you might be dealing with false positives and false negatives in your software tests and need some guidance, drop us a line.

by adm_etss | Mar 30, 2019 | Blog, Software Testing

Risk-based testing is all about evaluating and pinpointing the likelihood of software failure. What’s the probability that the software will crash upon release? What would the impact be? Think about the “known unknowns” in your software: this is what risk-based testing tries to unearth.

While it would be wonderful to have unlimited resources for testing, in our experience this is wishful thinking. Choices have to be made, and most of the time we go after issues that could prove critical for the business. When we define risk, we look at two dimensions as defined by HPE ALM (https://saas.hpe.com/en-us/software/alm): Business Criticality and Failure Probability. The first measures how crucial a requirement is for the business, and the second indicates how likely a test based on that requirement is to fail.
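One simple way to combine the two dimensions is to map each to an ordinal level and multiply them. The sketch below assumes a hypothetical Low/Medium/High scale and made-up requirements; it is not HPE ALM’s own calculation, and the tool’s scales and formula may differ.

```python
# Hypothetical ordinal scale; HPE ALM's own scales and formula may differ.
LEVELS = {"Low": 1, "Medium": 2, "High": 3}


def risk_score(criticality, probability):
    """Combine the two dimensions into one score (simple product of ordinal levels)."""
    return LEVELS[criticality] * LEVELS[probability]


# Hypothetical requirements: (business criticality, failure probability).
requirements = {
    "REQ-01 checkout payment": ("High", "Medium"),
    "REQ-02 profile avatar": ("Low", "High"),
    "REQ-03 account login": ("High", "High"),
    "REQ-04 newsletter footer": ("Low", "Low"),
}

# Highest-risk requirements first: these get tested earliest and most thoroughly.
ranked = sorted(requirements, key=lambda r: risk_score(*requirements[r]), reverse=True)
for req in ranked:
    print(risk_score(*requirements[req]), req)
```

Sorting by the combined score puts requirements that are both business-critical and likely to fail at the top of the test plan.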

Assessing risks

While there are many ways to approach risk assessment, we usually use HPE ALM because it’s a reliable tool and saves us a lot of time. Its integrated questionnaire lets us determine the risk and functional complexity of a requirement: each criterion has a set of possible values, and each value carries a weight. This allows us to estimate the testing effort and determine the best testing strategy.
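The questionnaire mechanism can be sketched as a weighted sum: each chosen answer contributes its weight, and the total is mapped to a category. The criteria, weights and thresholds below are hypothetical illustrations, not HPE ALM’s defaults.

```python
# Hypothetical questionnaire: each criterion has possible answers, each answer a weight.
# The criteria, weights and thresholds are illustrative, not HPE ALM's defaults.
QUESTIONNAIRE = {
    "Number of integrations": {"None": 0, "One or two": 10, "Three or more": 25},
    "Uses new technology": {"No": 0, "Yes": 20},
    "Amount of business logic": {"Simple": 5, "Moderate": 15, "Complex": 30},
}

# (minimum score, category), checked from the highest threshold downwards.
THRESHOLDS = [(50, "High"), (25, "Medium"), (0, "Low")]


def assess(answers):
    """Sum the weights of the chosen answers and map the total to a category."""
    total = sum(QUESTIONNAIRE[criterion][value] for criterion, value in answers.items())
    category = next(label for minimum, label in THRESHOLDS if total >= minimum)
    return total, category


print(assess({
    "Number of integrations": "Three or more",
    "Uses new technology": "Yes",
    "Amount of business logic": "Moderate",
}))  # (60, 'High')
```

A higher category would then justify a deeper level of testing for that requirement.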

When assessing risk, comparing the changes between two releases or versions is fundamental for quality assurance. It helps identify risk areas, reduce the total testing effort and manage project risks, delivering more value with less effort through more efficient testing.
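Comparing releases can be as simple as checking which modules changed and selecting the tests that cover them. This sketch assumes hypothetical per-module fingerprints (for example, content hashes) for each release and a hypothetical traceability map from test cases to modules.

```python
def changed_modules(old_release, new_release):
    """Return modules that were added or whose fingerprint changed between releases."""
    return {
        module
        for module, fingerprint in new_release.items()
        if old_release.get(module) != fingerprint
    }


def select_tests(coverage, changed):
    """Pick every test that exercises at least one changed module."""
    return sorted(test for test, modules in coverage.items() if modules & changed)


# Hypothetical module fingerprints (e.g. content hashes) for two releases.
release_1 = {"cart": "a1f3", "payment": "9c2e", "search": "77b0"}
release_2 = {"cart": "a1f3", "payment": "d410", "search": "77b0", "coupons": "51aa"}

# Hypothetical traceability map: which modules each test case touches.
coverage = {
    "test_add_to_cart": {"cart"},
    "test_card_payment": {"cart", "payment"},
    "test_apply_coupon": {"cart", "coupons"},
    "test_search_by_name": {"search"},
}

changed = changed_modules(release_1, release_2)
print(sorted(changed))                  # ['coupons', 'payment']
print(select_tests(coverage, changed))  # ['test_apply_coupon', 'test_card_payment']
```

Note that this sketch only reports added or modified modules; modules removed in the new release would need a separate check.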

The testing team can then explore the risks and provide feedback on test execution and on whether to continue testing.

Advantages vs Disadvantages

For some projects, the big challenge is reducing development time while maintaining the scope. Under these conditions, a smart risk-based testing approach is key: it allows the team to deliver the software on time while making the testing effort more efficient and effective.

Dealing with the most critical areas of the system first avoids the additional time and cost of solving those issues at a later stage in the project. It also ensures that testing time is spent according to the risk rating and the original mitigation plans.

A faster time to market and a lower cost of quality are more easily achievable with this risk-oriented approach.

Proper risk identification during analysis prevents the negative impact of rating a risk too low or assessing it on overly subjective criteria.

Identifying potential issues that could affect the project’s cost or outcome makes risk-based testing efficient and helps ensure better product quality.

Overall Benefits

Using a testing approach that takes risk into account promotes some of the best practices in risk management while allowing fewer tests, a sharper focus on critical areas, higher testing efficiency and increased cost-effectiveness.

We invite you to test these benefits for yourself and try this software testing approach on for size. If it fits, don’t hesitate to share some of your best practices in risk assessment with us at Euro-Testing.

Or, if you are not sure which testing approach would suit you best, let us know and we will tailor the best solution to your needs.

by adm_etss | Feb 26, 2019 | Blog, Software Testing

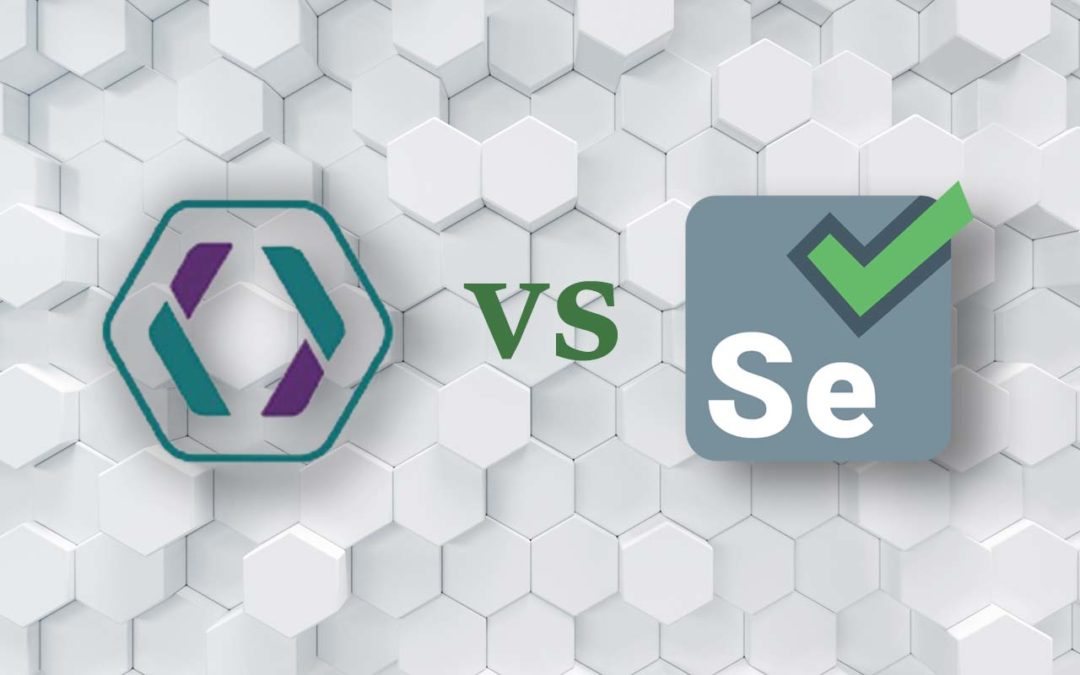

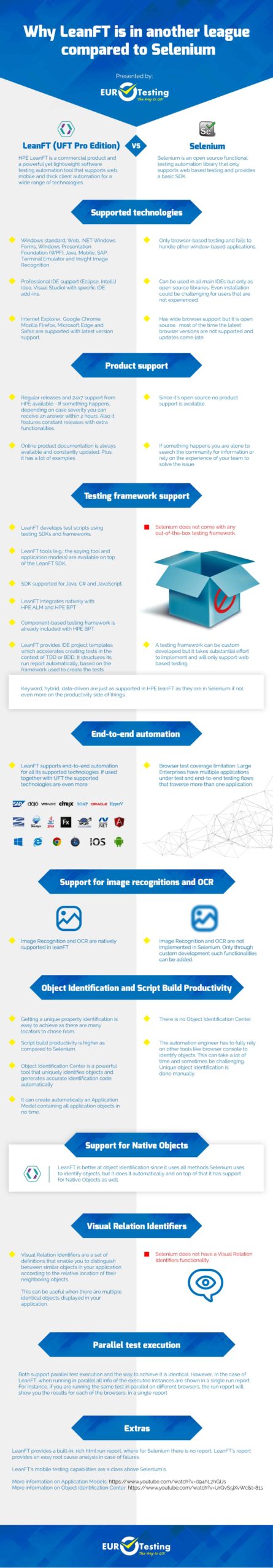

We tend to do our own benchmarking when it comes to software testing. When we put HPE LeanFT and Selenium side by side, this is what it came down to.

LeanFT comes out of the box with everything you need to start automating efficiently, with an acceptable learning curve that balances learning and productivity. It already includes a framework that supports end-to-end automation of multiple technologies, not just web.

We think that a Selenium framework would not be a good investment at this point in time. It can be a short-term compromise if:

- you have not already purchased HPE LeanFT (or UFT).

- you only need to run some web tests and do not plan to put together large end-to-end automated regression suites.

- you have highly skilled development engineers who are willing to write a lot of boilerplate code just to provide basic functional automation.

Why do we think this? Find out in the infographic.

by adm_etss | Jan 20, 2019 | Blog, Software Testing

Testing is integral to software development, and its role is to provide quality assurance for the final product. Without quality assurance, you risk shipping a faulty product, which leads to decreased sales, a damaged reputation and increased costs for bug fixing and customer care.

So testing is a necessity for any business that hopes to optimize its operations. However, testing is not a core competency for most companies. It is often performed by a dedicated part of the team, by someone who specializes in something else but does it out of necessity, or it is outsourced. Which option is better, and why?

In-house testing vs. outsourcing

What is the difference between having an in-house tester and outsourcing the service? Regarding operations, you either have people on your team doing the testing, or you give your requirements to a third party that takes over the testing part of the project. This third party will keep in contact with you and your team for updates and progress reports.

Below, we discuss some of the advantages of outsourcing and explain why it might be a better option for your company than in-house software testing.

Quality of testing

There is a difference in skill level and quality of testing when it comes to outsourcing. Often, your team simply lacks the know-how to perform software testing to the highest standards. Your alternatives are either to hire new people with the required know-how or to spend resources on training your existing staff. Either option distracts your team from its main tasks and results in extra costs.

Outsourcing also implies a cost, but you work with specialists who have a lot of experience with similar projects and are almost always guaranteed to work better and more efficiently than an in-house team.

Standardized solutions

Often, companies have to come up with new testing solutions for every project they develop. These custom solutions take resources to develop, and they quickly become obsolete. What’s more, new team members and managers have to spend precious time learning the ropes of each custom solution. Outsourcing eliminates this problem by providing standardized solutions that have been proven to work and that remain up to date and consistent no matter how the in-house team changes.

Speed up projects

A key result of outsourcing is getting things done faster. Thanks to standardization, specialization and years of experience, companies that specialize in testing can do a better job in less time. This way, your product is ready for the market in record time, with no bugs or errors and with an optimal user experience. It goes without saying that meeting established deadlines is essential for project efficiency and for coordination between departments.

Decreased operations complexity

With outsourcing, you simply have less day-to-day hassle managing your team and its output. Outsourcing spares you the trouble of adding people to your team or arranging special training. It also removes the need to manage this essential yet complex activity and supervise the quality of testing.

Reduced costs

You might think that having someone in-house do the testing will surely save you some money compared to contracting a third-party service. While you might be right in the short run, in the medium and long run you risk incurring far greater costs for your department and company.

Remember that the main idea behind outsourcing of any kind is cost reduction. When you outsource, you transfer part of your operations to another company, reducing complexity for your own. Moreover, this third party is a specialist in something that you’re not, so they will be more efficient in their operations, delivering the same or better quality, at a fraction of the cost that it would take for your company to do it.

Last but not least, better-quality testing means better-quality products. And while customers will pay more for higher quality, they will stop buying, and spread the word, if they encounter a faulty product.

For all these reasons, we encourage you to give software testing outsourcing some thought. Contact us at Euro-Testing to get more information about the solutions that would fit your company. We can help you figure out whether outsourcing suits your needs, and provide you with cost estimates and potential benefits so you can make an informed decision.

by adm_etss | Nov 25, 2018 | Blog, Software Testing

When developing software applications, one of the most critical aspects is testing. Neglecting it may lead to poor product quality, followed by customer dissatisfaction and, ultimately, higher overall costs.

One of the most common challenges for a business is deciding what type of software testing it needs, based on factors like project requirements, budget, timeline, expertise and suitability. Since both manual and automated software testing have advantages and disadvantages, this article will give you a glimpse into which of the two is best suited to your needs.

What is the difference?

Manual software testing means using a program or product as an end user would, making sure that all features work appropriately. In practice, a tester runs each program and set of tasks individually to check whether the outcomes match expectations.

Automated software testing uses dedicated tools to run predefined test cases and compare the results to the expected behavior. Once automated tests are created, they can easily be repeated and extended to perform complex tasks that would take too long, or be impossible, to run manually.
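As a minimal sketch of what such a test looks like, here is a Python `unittest` case that checks a hypothetical `apply_discount` function against a table of expected results. Neither the function nor the cases come from a real project.

```python
import unittest


def apply_discount(price, percent):
    """Hypothetical function under test: returns the price after a percentage discount."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (100 - percent) / 100, 2)


class ApplyDiscountTest(unittest.TestCase):
    # Each row pairs inputs with the expected result, so adding coverage means adding a row.
    CASES = [
        (100.0, 0, 100.0),
        (100.0, 25, 75.0),
        (19.99, 10, 17.99),
        (50.0, 100, 0.0),
    ]

    def test_expected_prices(self):
        for price, percent, expected in self.CASES:
            # subTest reports each failing row separately instead of stopping at the first.
            with self.subTest(price=price, percent=percent):
                self.assertEqual(apply_discount(price, percent), expected)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 150)


if __name__ == "__main__":
    unittest.main(exit=False)  # exit=False keeps the interpreter running after the report
```

Extending the suite is a matter of adding rows to `CASES`, and every run repeats all of them with no extra manual effort.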

When should you use manual or automated software testing?

When does it make sense to use automated software testing over manual testing? What can you gain by using automated testing tools? Here are some arguments that will help you decide which method works best for your project.

Project requirements

Manual testing usually makes sense when you deal with small projects or have budget limitations. One of its most important advantages is that the person performing the testing can pay more attention to detail. Unlike a predefined script, this option allows the software under development to be used as real users would use it upon launch. However, on a large-scale project, manual testing can turn into a tedious and time-consuming process, not to mention an expensive one. In this case, automated testing proves both quicker and more effective because it streamlines the process and frees up resources for other core activities.

Financial implications

Manual testing might sound like a great solution cost-wise. Nevertheless, while automation tools can be expensive in the short term, they turn out to be a long-term investment. Not only do they achieve more than a human can in a given amount of time, but they also discover bugs faster. This allows your team to react more quickly, saving you both precious time and money. Moreover, automation provides greater consistency and fewer errors, which results in a more valuable final product with less need for customer service.

Timeline

The main goal in software development is a timely release. Manual testing is practical when test cases are run once or twice and there is no need for frequent repetition. If, on the other hand, you need frequent testing, automated tests can be run over and over again at little additional cost, and they are much faster than manual tests. Automated software testing can reduce the time needed to run repetitive tests from days to hours, and these time savings translate into cost cuts for your business.

Sometimes, manual testing isn’t enough

Automated tests also let you test things that aren’t feasible manually. For example, questions like ‘what if I had 500 accounts?’ or ‘what if I processed ten transactions simultaneously?’ can only be answered efficiently with automated tests.
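The sketch below shows the idea on a hypothetical in-memory `Bank` class: it creates 500 accounts, submits ten transfers at the same time through a thread pool and checks that no money was created or lost. A real test would target the actual system under test rather than a toy model like this one.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class Bank:
    """Hypothetical system under test: an in-memory ledger guarded by a lock."""

    def __init__(self, accounts, opening_balance):
        self.balances = {f"ACC-{i:03d}": opening_balance for i in range(accounts)}
        self._lock = threading.Lock()

    def transfer(self, source, target, amount):
        # Without this lock, concurrent read-modify-write updates could corrupt balances.
        with self._lock:
            if self.balances[source] < amount:
                return False
            self.balances[source] -= amount
            self.balances[target] += amount
            return True


def run_scenario(accounts=500, concurrent_transfers=10):
    bank = Bank(accounts, opening_balance=100)
    expected_total = accounts * 100
    ids = list(bank.balances)
    # Ten transfers submitted at the same time, each moving money to the next account.
    jobs = [(ids[i], ids[i + 1], 30) for i in range(concurrent_transfers)]
    with ThreadPoolExecutor(max_workers=concurrent_transfers) as pool:
        results = list(pool.map(lambda job: bank.transfer(*job), jobs))
    # Invariant: money is moved, never created or destroyed.
    return all(results), sum(bank.balances.values()) == expected_total


print(run_scenario())  # (True, True)
```

Changing `accounts` or `concurrent_transfers` scales the scenario up without any extra manual work, which is exactly the kind of question that is impractical to answer by hand.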

Final thoughts

Both software testing options have pros and cons. Before deciding, make sure you consider your time, your resources and the size of your project, as well as the quality of the automated tools you’ll be using. Always remember, though, that combining both methods is an option. In fact, combining the two may be the best way to cancel out each other’s cons and develop the best software possible. For more in-depth information on what type of testing is best suited to your project, contact Euro-Testing and we will assess your needs and provide the best solutions for your particular situation.